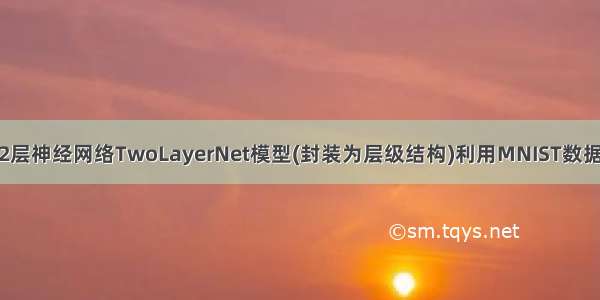

DL (DNN): Training a custom two-layer neural network (TwoLayerNet, organized as layers) on the MNIST dataset, with a gradient check (GC) comparison

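Introduction

Why organize a neural network as a stack of layers? When each building block of the network is implemented as a layer, gradients can be computed efficiently with error backpropagation. Comparing the gradients from numerical differentiation with those from backpropagation then confirms whether the backpropagation implementation is correct (a gradient check).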
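The gradient-check idea can be sketched on a single layer. The `Relu` class and the `numerical_gradient` helper below are illustrative assumptions, not the code from this post: the layer exposes `forward` and `backward`, and the check compares the backpropagated gradient against a central-difference estimate.

```python
import numpy as np


class Relu:
    """ReLU as a layer: forward zeroes non-positive inputs, backward blocks their gradient."""

    def __init__(self):
        self.mask = None

    def forward(self, x):
        self.mask = x <= 0          # remember where the input was non-positive
        out = x.copy()
        out[self.mask] = 0
        return out

    def backward(self, dout):
        dx = dout.copy()
        dx[self.mask] = 0           # no gradient flows through the zeroed entries
        return dx


def numerical_gradient(f, x, h=1e-4):
    """Central-difference estimate of the gradient of a scalar-valued f at x (hypothetical helper)."""
    grad = np.zeros_like(x)
    for idx in np.ndindex(x.shape):
        original = x[idx]
        x[idx] = original + h
        f_plus = f(x)
        x[idx] = original - h
        f_minus = f(x)
        x[idx] = original           # restore the entry before moving on
        grad[idx] = (f_plus - f_minus) / (2 * h)
    return grad


# Inputs chosen away from 0, where ReLU is not differentiable.
x = np.array([[-1.5, 0.5, 2.0], [0.3, -0.7, 1.2]])

# Use sum(ReLU(x)) as a scalar "loss", so the upstream gradient is all ones.
relu = Relu()
relu.forward(x)
grad_backprop = relu.backward(np.ones_like(x))
grad_numerical = numerical_gradient(lambda v: np.sum(Relu().forward(v)), x)

max_diff = np.max(np.abs(grad_backprop - grad_numerical))
print(max_diff)
```

If the two gradients agree up to a very small difference, the `backward` implementation is likely correct; a large difference points to a bug in the layer.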

Contents

Output

Design

Core code

Errors encountered during implementation

Output

Design

Core code

    import numpy as np
    from collections import OrderedDict

    # Affine, Relu and SoftmaxWithLoss are layer classes that are not part of this excerpt.


    class TwoLayerNet:
        def __init__(self, input_size, hidden_size, output_size, weight_init_std=0.01):
            # Initialize weights and biases
            self.params = {}
            self.params['W1'] = weight_init_std * np.random.randn(input_size, hidden_size)
            self.params['b1'] = np.zeros(hidden_size)
            self.params['W2'] = weight_init_std * np.random.randn(hidden_size, output_size)
            self.params['b2'] = np.zeros(output_size)

            # Build the layers in forward order
            self.layers = OrderedDict()
            self.layers['Affine1'] = Affine(self.params['W1'], self.params['b1'])
            self.layers['Relu1'] = Relu()
            self.layers['Affine2'] = Affine(self.params['W2'], self.params['b2'])
            self.lastLayer = SoftmaxWithLoss()

        def predict(self, x):
            for layer in self.layers.values():
                x = layer.forward(x)
            return x

        # x: input data, t: supervision labels
        def loss(self, x, t):
            y = self.predict(x)
            return self.lastLayer.forward(y, t)

        def accuracy(self, x, t):
            y = self.predict(x)
            y = np.argmax(y, axis=1)
            if t.ndim != 1:
                t = np.argmax(t, axis=1)
            accuracy = np.sum(y == t) / float(x.shape[0])
            return accuracy

        def gradient(self, x, t):
            # Forward pass
            self.loss(x, t)

            # Backward pass through the layers in reverse order
            dout = 1
            dout = self.lastLayer.backward(dout)
            layers = list(self.layers.values())
            layers.reverse()
            for layer in layers:
                dout = layer.backward(dout)

            # Collect the gradients stored in the Affine layers
            grads = {}
            grads['W1'], grads['b1'] = self.layers['Affine1'].dW, self.layers['Affine1'].db
            grads['W2'], grads['b2'] = self.layers['Affine2'].dW, self.layers['Affine2'].db
            return grads


    # x_batch, t_batch and the numerical_gradient_api method are not part of this excerpt.
    network_batch = TwoLayerNet(input_size=784, hidden_size=50, output_size=10)
    grad_numerical = network_batch.numerical_gradient_api(x_batch, t_batch)
    grad_backprop = network_batch.gradient(x_batch, t_batch)
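Once both gradient dictionaries exist, the gradient check reduces to comparing them key by key. The following is a minimal sketch under stated assumptions: `gradient_check` is a hypothetical helper, and the two dictionaries hold made-up toy values standing in for `grad_numerical` and `grad_backprop`.

```python
import numpy as np


def gradient_check(grad_numerical, grad_backprop):
    """Return the mean absolute difference for each parameter key (hypothetical helper)."""
    return {
        key: float(np.mean(np.abs(grad_backprop[key] - grad_numerical[key])))
        for key in grad_numerical
    }


# Toy stand-ins for the two gradient dictionaries; the values are made up for illustration.
grad_numerical = {
    "W1": np.array([[0.10, -0.20], [0.30, 0.40]]),
    "b1": np.array([0.05, -0.05]),
}
grad_backprop = {
    "W1": np.array([[0.10, -0.20], [0.30, 0.40]]) + 1e-9,
    "b1": np.array([0.05, -0.05]),
}

diffs = gradient_check(grad_numerical, grad_backprop)
for key, diff in diffs.items():
    print(f"{key}: {diff:.2e}")
```

Differences close to zero for every key suggest that the backpropagation code matches numerical differentiation.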

Errors encountered during implementation

An error occurred and is not yet resolved!

    Traceback (most recent call last):
      File "F:\File_Python\Python_daydayup\190316.py", line 281, in <module>
        grad = network.gradient(x_batch, t_batch)
      File "F:\File_Python\Python_daydayup\190316.py", line 222, in gradient
        self.loss(x, t)
      File "F:\File_Python\Python_daydayup\190316.py", line 193, in loss
        return self.lastLayer.forward(y, t) #☆★☆★☆★☆★☆★☆★☆★☆★☆★☆★☆★☆★☆★☆ ------partially changed
      File "F:\File_Python\Python_daydayup\190316.py", line 132, in forward
        self.loss = cross_entropy_error(self.y, self.t)
      File "F:\File_Python\Python_daydayup\190316.py", line 39, in cross_entropy_error
        return -np.sum(np.log(y[np.arange(batch_size), t.astype('int64')] + 1e-7)) / batch_size#t.astype('int64')
    IndexError: shape mismatch: indexing arrays could not be broadcast together with shapes (100,) (100,10)
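The shapes in the message, (100,) and (100,10), suggest that `t` arrives one-hot encoded while the indexing expression expects one class index per sample. Assuming that is the cause, one possible fix is to convert one-hot labels to indices inside `cross_entropy_error`. The version below is a sketch of that idea, not the code from the original script.

```python
import numpy as np


def cross_entropy_error(y, t):
    """Mean cross-entropy; accepts labels either as class indices or as one-hot vectors."""
    if y.ndim == 1:
        # Treat a single sample as a batch of size 1.
        t = t.reshape(1, t.size)
        y = y.reshape(1, y.size)

    # If t has as many elements as y, assume it is one-hot and convert it to class indices.
    if t.size == y.size:
        t = t.argmax(axis=1)

    batch_size = y.shape[0]
    return -np.sum(np.log(y[np.arange(batch_size), t.astype("int64")] + 1e-7)) / batch_size


# Toy predictions for two samples and three classes.
y = np.array([[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]])
t_onehot = np.array([[1, 0, 0], [0, 1, 0]])
t_index = np.array([0, 1])

loss_onehot = cross_entropy_error(y, t_onehot)
loss_index = cross_entropy_error(y, t_index)
print(loss_onehot, loss_index)
```

With this conversion both label formats yield the same loss. The backward pass of the loss layer would need to handle the same two formats, which cannot be verified here because that code is not shown.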

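Related articles

DL (DNN): Training and prediction on the MNIST dataset with a custom two-layer neural network TwoLayerNet (the more efficient layered structure)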
